Mellanox Technologies has long desired to be a major player in the Ethernet switching space, and with its forthcoming Spectrum switch ASICs and ConnectX-4 adapter cards, the company is positioning itself well to ride the next upgrade wave of Ethernet to roll into the datacenter.

At a gala event at the top of One World Trade in New York City, the top brass of Mellanox unveiled the new Spectrum chip and its companion ConnectX-4 Lx chip, both of which will offer significant bandwidth and latency advantages over the current crop of products running at 10 Gb/sec and 40 Gb/sec speeds.

The chips will be at the heart of new switches, sporting 50 Gb/sec and 100 Gb/sec switching, and adapter cards that deliver 25 Gb/sec links up from the servers. While Mellanox has not discussed any design wins with the Spectrum switch ASICs, the company is fielding a chip that will compete against the “Tomahawk” ASICs from Broadcom, which is in the process of being acquired by Avago Technologies for $37 billion, and the XPliant chips that were created by a networking upstart of that name, which was acquired by Cavium Networks last summer for $90 million. No one is quite sure what Intel has cooking with regard to 50GE and 100GE on the network and 25GE on the server adapter, but with the hyperscalers and cloud builders basically forcing the IEEE to adopt a standard that better suits their power and money budgets, Intel clearly cannot let this opportunity pass by.

Mellanox, which is perhaps best known for the InfiniBand switches and adapters that are commonly used in supercomputing clusters, cannot miss this opportunity to sell lots of chips or finished products to hyperscalers and cloud builders, either, and that is why it was one of the instigators – along with hyperscalers Google and Microsoft, rival chip maker Broadcom, and switch maker Arista Networks – that founded the 25G Ethernet Consortium last July. Their argument, put simply, was that the 25 Gb/sec lanes that were developed to support the second generation of 100 Gb/sec switching should be geared down to server ports running at 25 Gb/sec and should also allow for 50 Gb/sec switching. The argument was compelling, and within a few weeks, the IEEE backtracked from its initial rejection of the idea and embraced the 25GE efforts.

The movement towards adapters and switching using 25GE technology is also coinciding with the rise of open switches, which seek to break the network operating system free from the underlying switch, much as customers using X86 servers can choose Linux, Windows, or other operating systems for their machines. Cumulus Networks, Pluribus Networks, Pica8, and Big Switch Networks are all creating open switch operating systems, and they generally are supporting the current “Trident” and future “Tomahawk” families of switch ASICs to start because these chips, from Broadcom, have dominant market share in datacenter switching. Mellanox thinks that its Spectrum ASICs can give Broadcom and others a run for the money, which works out to be about a $7 billion opportunity for top-of-rack switching in the datacenter, Kevin Deierling, vice president of marketing at Mellanox, tells The Next Platform.


At the moment, Mellanox is running its own Linux-derived MLNX-OS network operating system on the forthcoming Spectrum ASICs, and it is working with Cumulus Networks, Juniper Networks, and others to port their network operating systems to the chip, according to Mellanox CEO Eyal Waldman. Deierling was not at liberty to discuss any design wins that Mellanox has had with the Spectrum chips, and as for the uptake for Mellanox Ethernet chips among hyperscalers, Waldman reminded everyone that Mellanox has fielded prototype open switches based on its current SwitchX-2 chips, which do both Ethernet and InfiniBand, under the auspices of the Open Compute Project founded by Facebook. Intel and Broadcom did as well, and these were unveiled back in November 2013. Since that time, a number of companies, including Dell, Hewlett-Packard, and various original design manufacturers and their reseller partners, have fielded open switches, too.

When the 25G Ethernet effort was started, the proponents of the standard believed that the resulting products would be aimed at hyperscalers, cloud builders, and other service providers who wanted more bandwidth between their systems and their top of rack switches, but who were not willing to go to 40 Gb/sec cards on their systems and 100 Gb/sec on their backbones just yet. We happen to think that while these very high-end customers, who operate warehouse-scale datacenters, will be the early and enthusiastic adopters of 25 Gb/sec adapters and 50 Gb/sec top of rack switches, the rest of the enterprise market will do the same math and in many cases will want similar setups. As was pointed out to us by Broadcom when it launched its “Trident-II+” Ethernet ASICs, which support a slew of 10 Gb/sec downlinks and 100 Gb/sec uplinks, back in May, only around 35 percent of the server ports are running at 10 Gb/sec speeds today, with more than 60 percent running at 1 Gb/sec speeds. Broadcom is betting a lot of enterprises are looking for a smoother, cheaper path to 10 Gb/sec for server connectivity, and it is probably right about that. But those who are running out of gas on 10 Gb/sec – particularly those with heavily virtualized systems working on a budget or doing in-memory processing across clusters – will probably gravitate to 25 Gb/sec network links on the servers.

Pulling Apart Ethernet And InfiniBand

With the SwitchX family of chips, which launched a few years ago, Mellanox created a single-chip ASIC that could support both Ethernet and InfiniBand network protocols as well as provide a Fibre Channel gateway. The idea was to sell different versions of the SwitchX chips initially as separate InfiniBand and Ethernet parts, and then to eventually offer a hybrid box that could support both protocols dynamically. This last bit never quite happened in a formal product line from Mellanox, and to our knowledge none of the customers of Mellanox who make their own switches or sell them to others ever made use of this dual-personality nature of the chips.

One important side effect of adding Ethernet on top of InfiniBand with the SwitchX chips is that the latencies for port hops when using the InfiniBand protocol actually went up compared to prior InfiniBand chips. It was not a lot, as we previously reported, but there were several years where it went up a bit and did not go down. With lower latency being a very big deal in the high performance computing segment – and increasingly with clustered storage and clustered databases – this increase in latency was a big deal. So late last year, Mellanox delivered its Switch-IB chips and went back to making ASICs specifically for InfiniBand and got the latencies going down on the right curve again.

With the Spectrum Ethernet ASICs that Mellanox has just unveiled, support for the RDMA over Converged Ethernet (RoCE) protocol, which brings some of the low-latency features of InfiniBand over to Ethernet, is important, but given the needs of high-end datacenters, lower thermals, lower cost per unit of bandwidth, and higher bandwidth are all much more important. And this is what the Spectrum design has focused on.

Like other switch ASIC makers, Mellanox does not provide die shots of its chips because that would give away too much information to competitors, and it doesn’t talk much about the internals of the chips either. The SwitchX chips from April 2011 had 1.4 billion transistors, were implemented in a 40 nanometer process from foundry Taiwan Semiconductor Manufacturing Corp, and delivered Ethernet ports running at 10 Gb/sec and 40 Gb/sec and InfiniBand ports running at 56 Gb/sec; the chip topped out at 4 Tb/sec of aggregate switching bandwidth with port-to-port hop latencies on the order of 170 nanoseconds to 220 nanoseconds depending on the protocol and workload. The SwitchX-2 follow-ons from October 2012 had the same basic feeds and speeds, but added some software-defined networking (SDN) functionality to the chip and enabled that Fibre Channel capability.

The Spectrum Ethernet chips are etched in a 28 nanometer process from TSMC, the same process that is used to make the Switch-IB chips announced last fall, which are mainly aimed at supercomputing workloads. The Switch-IB InfiniBand ASICs topped out at 3.6 Tb/sec of aggregate bandwidth and 130 nanoseconds on a port-to-port hop. The Spectrum chip delivers 3.2 Tb/sec of non-blocking Ethernet switching bandwidth and under 300 nanosecond port-to-port latency. The ASIC supports the new hyperscale-driven speeds of 25 Gb/sec and 50 Gb/sec as well as 100 Gb/sec, and can also be geared down to 10 Gb/sec or 40 Gb/sec speeds when necessary. Deierling says that Mellanox will be stressing that its Ethernet chips do not drop packets, unlike the Ethernet chips from Broadcom, which have been shown to do so in a recent report from The Tolly Group. That test pitted the SwitchX-2 from Mellanox against the Trident-II ASICs from Broadcom, which is useful for companies buying switches based on these chips. But the important comparison will be looking at packet losses on Spectrum and Tomahawk chips as they start shipping in products later this year.

The Spectrum ASIC will allow companies to create switches with a number of different configurations, including machines that have 32 ports running at 100 Gb/sec or stepped down to 40 Gb/sec for compatibility, or machines that support 64 ports running at 25 Gb/sec or 50 Gb/sec with step downs to 10 Gb/sec for compatibility. Other combinations are possible, such as 48 downlinks running at 25 Gb/sec and six ports running at 100 Gb/sec, says Deierling. Ports running at the higher speeds will be configurable with four-to-one cable splitters, allowing companies to buy faster ports now, hook them to slower network cards, and then upgrade their servers at some later date with faster adapter cards.
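To see how these port mixes relate to the chip's stated switching capacity, here is a minimal sketch that sums the port bandwidth of each configuration mentioned above. It assumes, purely for illustration, that the 3.2 Tb/sec figure can be treated as a budget of 3,200 Gb/sec counted once per port; the function names and that counting convention are ours, not Mellanox's.

```python
# Assumption (not from Mellanox): treat the 3.2 Tb/sec figure as a budget
# of 3,200 Gb/sec, counting each port's speed once.
ASIC_BUDGET_GBPS = 3200


def total_gbps(config):
    """Sum bandwidth for a config given as {port_speed_gbps: port_count}."""
    return sum(speed * count for speed, count in config.items())


def fits(config, budget=ASIC_BUDGET_GBPS):
    """True if the configuration does not exceed the assumed budget."""
    return total_gbps(config) <= budget


# Port mixes named in the article.
configs = {
    "32 x 100G": {100: 32},
    "64 x 50G": {50: 64},
    "64 x 25G": {25: 64},
    "48 x 25G + 6 x 100G": {25: 48, 100: 6},
}

for name, cfg in configs.items():
    print(f"{name}: {total_gbps(cfg)} Gb/sec, fits budget: {fits(cfg)}")
```

Under that assumption, the 32-port 100 Gb/sec and 64-port 50 Gb/sec layouts use the whole budget, while the 25 Gb/sec and mixed layouts leave headroom.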

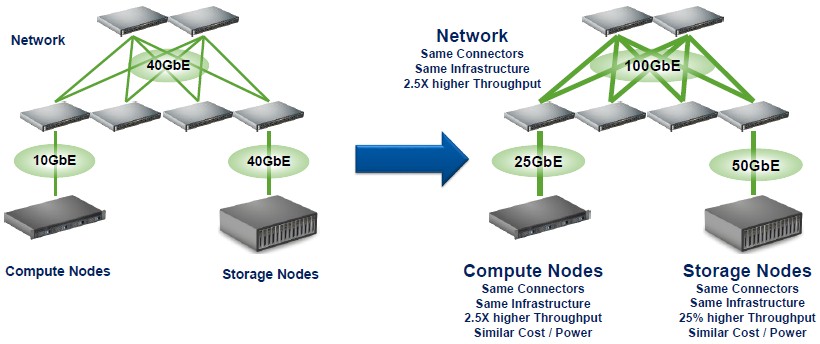

Mellanox clearly thinks that companies are ready to upgrade to faster networks. “We believe that 25G is the new 10G, 50G is the new 40G, and 100G is the present,” says Waldman. The important thing is that the 25 Gb/sec ports will use the same SFP cables that 10 Gb/sec ports use today, and the 50 Gb/sec and 100 Gb/sec ports will use the same QSFP cables that 40 Gb/sec switches have used. So this is what happens:

The upgrades on the servers and in the switches are about as painless as you can make them.

Mellanox will not just be selling the Spectrum ASICs to partners, but will continue to make and sell its own switches, too. While the company was only showing off two machines at its launch event, it has three distinct Spectrum switches in the works:

The half-width 16-port SN2100 units are interesting in that they will be able to provide redundant connectivity for racks of servers; imagine four hyperscale or HPC server enclosures with four nodes each, as is a common form factor, linked to each pair of these half-width switches as a compute-network unit. With cable splitters, you could provide 64 redundant ports of downlinks running at 25 Gb/sec from 1U of rack space. Mellanox is also pitching these SN2100 switches for storage clusters and database clusters.
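The arithmetic behind that 64-port figure can be sketched as follows. This is a back-of-the-envelope model, assuming each of the 16 ports runs at 100 Gb/sec and is split four ways, and that two half-width switches sit side by side in 1U so every server link is duplicated; the helper names are hypothetical.

```python
SPLIT_FACTOR = 4          # four-to-one cable splitters, per the article
PORTS_PER_SN2100 = 16     # half-width switch port count, per the article
SWITCHES_PER_1U = 2       # assumption: two half-width units share 1U


def split_ports(physical_ports, split=SPLIT_FACTOR):
    """Number of downlinks after splitting every physical port."""
    return physical_ports * split


def split_speed_gbps(port_gbps, split=SPLIT_FACTOR):
    """Speed of each downlink after an even split of one port."""
    return port_gbps / split


# Without splitters: 4 enclosures x 4 nodes fills one 16-port switch.
nodes = 4 * 4

# With splitters: each switch offers 64 downlinks at 25 Gb/sec.
downlinks_per_switch = split_ports(PORTS_PER_SN2100)
downlink_speed = split_speed_gbps(100)

# Redundant means each server connects once to each switch in the pair,
# so the number of servers served equals the downlinks on one switch.
redundant_server_ports = downlinks_per_switch
total_links_in_1u = downlinks_per_switch * SWITCHES_PER_1U

print(nodes, downlinks_per_switch, downlink_speed, total_links_in_1u)
```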

From the outside, the 32 port switches look like the existing 40 Gb/sec units from Mellanox, and that stands to reason. The top-end SN2700 is aimed at so-called multihost systems using cable splitters to provide 50 Gb/sec uplinks from servers. The SN2700 is also being pitched as an aggregation switch for 100 Gb/sec backbones or high-speed top of racks, and the SN2410 is a top of rack unit as well, but running at the slower 25 Gb/sec speed.

That brings us to adapter cards. At the Open Compute Summit earlier this year, Mellanox was showing off the ConnectX-4 server adapter, which had a single 100 Gb/sec port that could be carved up into four virtual ports running at 25 Gb/sec. This network adapter card was actually part of Facebook’s “Yosemite” microserver, the social network’s second stab at diminutive computing and one based on Intel’s Xeon D processor.

In a normal server, you usually have two and sometimes four sockets that are lashed together with a high-speed, point-to-point interconnect – in the case of the Xeon processors, it is the QuickPath Interconnect, or QPI. Machines have a dedicated network card, and all of the processors generally link out to the network through a single card, one with one or perhaps two ports. With the microserver approach that is likely to become more common, especially for certain workloads in hyperscale settings, multiple single-socket servers are linked over a midplane to a single, fast network adapter. The adapter has virtualization capabilities, and in the case of the ConnectX-4, the 100 Gb/sec of bandwidth is partitioned up into four 25 Gb/sec virtual ports, one for each server. This multi-host capability, says Waldman, cuts the number of cards you need by 75 percent and drops the cost by 45 percent compared to using two-port 10 Gb/sec adapter cards.
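The 75 percent figure follows directly from four servers sharing one adapter instead of each having its own. A minimal sketch of that reasoning is below; the function names are ours, and the 45 percent cost reduction is Waldman's claim, which depends on card pricing and is not modeled here.

```python
def card_reduction(hosts_per_adapter):
    """Fraction of adapter cards saved when hosts share one card
    instead of each host having a dedicated card."""
    return 1 - 1 / hosts_per_adapter


def per_host_gbps(adapter_gbps, hosts):
    """Bandwidth of each virtual port under an even partition."""
    return adapter_gbps / hosts


# ConnectX-4 multi-host case from the article: one 100 Gb/sec adapter,
# four single-socket servers behind it.
print(card_reduction(4))       # fraction of cards eliminated
print(per_host_gbps(100, 4))   # Gb/sec available to each server
```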

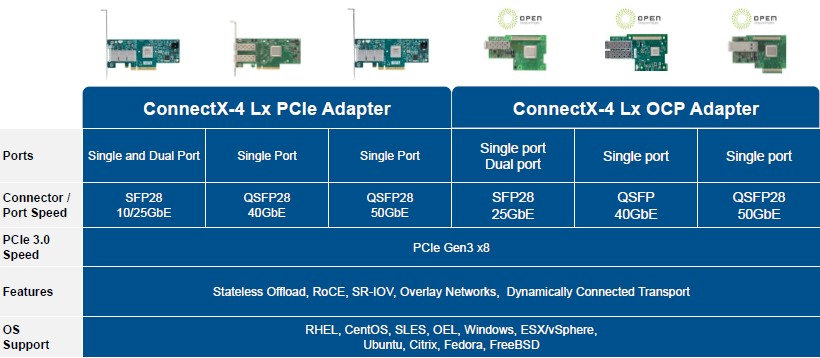

With the ConnectX-4 Lx card announced this week, Mellanox is gearing down the speeds on the adapter cards to 25 Gb/sec, 40 Gb/sec, or 50 Gb/sec, depending on the adapter model, and offering it with one or two ports.

The ConnectX-4 Lx adapter card with a single port running at 25 Gb/sec speeds has a suggested retail price of $500; it will be available this quarter. The Spectrum switches are due in the third quarter, and the top-end Spectrum SN2700 switch has a suggested retail price of $49,000, or $1,531 per port. This is lower than the aggressive pricing that Dell has promised with its Force10 S series switches using Broadcom’s Tomahawk ASICs. Dell was talking about pushing the price down below $2,000 per port when its 100 Gb/sec switches ship later this year.
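The per-port number is simply the list price divided across the SN2700's 32 ports, and the same division gives a rough cost per Gb/sec for both the switch and the adapter. The sketch below uses only the list prices quoted above; the $2,000 Dell figure is the ceiling Dell mentioned rather than an actual price, and the helper name is hypothetical.

```python
def price_per_unit(total_price, units):
    """Divide a list price evenly across ports or across Gb/sec."""
    return total_price / units


# SN2700: $49,000 list price, 32 ports at 100 Gb/sec.
sn2700_per_port = price_per_unit(49_000, 32)
sn2700_per_gbps = price_per_unit(sn2700_per_port, 100)

# ConnectX-4 Lx: $500 list price, one port at 25 Gb/sec.
lx_per_gbps = price_per_unit(500, 25)

# Gap to the "below $2,000 per port" target Dell talked about.
dell_target_per_port = 2_000
gap_fraction = 1 - sn2700_per_port / dell_target_per_port

print(f"SN2700: ${sn2700_per_port:,.2f} per port, "
      f"${sn2700_per_gbps:.2f} per Gb/sec")
print(f"ConnectX-4 Lx: ${lx_per_gbps:.2f} per Gb/sec")
print(f"SN2700 is {gap_fraction:.1%} under the $2,000 target")
```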

Pricing is not available on all of the Mellanox switches as yet, and neither are all of the feeds and speeds, but Deierling says that a 25 Gb/sec port should cost somewhere between 1.4X and 1.5X the cost of a 10 Gb/sec port. It looks to us, by that math, that a 100 Gb/sec port will cost around 3X the cost of a 25 Gb/sec port on the Spectrum switches. Similarly, a ConnectX-4 Lx card costs about 1.5X that of the 10 Gb/sec ConnectX-3 cards it replaces in the Mellanox line. In most cases, you are getting 2.5X the performance for around 1.5X the price.
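Put in cost-per-bit terms, the multipliers above can be worked through with a short sketch. It assumes that "performance" here means raw link bandwidth (25 Gb/sec versus 10 Gb/sec) and uses only the ratios quoted in the text; the function name is ours.

```python
def relative_cost_per_gbps(price_ratio, bandwidth_ratio):
    """Cost per Gb/sec of the new part relative to the old one.
    A result below 1.0 means each unit of bandwidth got cheaper."""
    return price_ratio / bandwidth_ratio


bandwidth_ratio = 25 / 10  # 25 Gb/sec port versus 10 Gb/sec port

# Deierling's range for a 25 Gb/sec switch port versus a 10 Gb/sec port.
low = relative_cost_per_gbps(1.4, bandwidth_ratio)
high = relative_cost_per_gbps(1.5, bandwidth_ratio)

print(f"25G port cost per Gb/sec: {low:.2f}X to {high:.2f}X of 10G")
print(f"Saving per Gb/sec: {1 - high:.0%} to {1 - low:.0%}")
```

By the same ratio, our estimate that a 100 Gb/sec port costs around 3X a 25 Gb/sec port would put its cost per Gb/sec at roughly 0.75X that of the 25 Gb/sec port.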

This is a bargain that many enterprises – and not just hyperscalers and cloud builders – will go for.

25/50/100GbE looks like THE right technology.

But it should also be available at the right price – which so far seems highly uncertain since price/performance for new Mellanox products mentioned in this article is much worse (for $/Gbs) than those of already available 56GbE switches and NICs – at least 2 times worse for switches (vs SX1710) and 3 times worse for NICs (vs ConnectX-3 Pro EN).

It’s also completely unclear why Mellanox EDR (100Gbs) 36-port switches already cost <500$/port retail but SN2700 is listed above $1,500 per port.

Hopefully real prices for those new switches/NICs will be better than for previous ones – but the MSRPs don't inspire much optimism.

This is a vaporware announcement. What a joke. I think it’s funny to listen to Eyal lie on TV. When asked about competition for Spectrum he flat out lied and said that the only competition is 10G end to end ethernet solutions. The truth is that Broadcom Tomahawk is already shipping in production.

There is not one major OEM on record to use this chip. Nobody will either. It will once again be Mellanox only. Even if the thing were to be usable, who wants to compete against your chip supplier. Mellanox will be the only user of this chip. It will get the same market traction as SwitchX-2, zero.

I don’t see any availability dates in any of the releases. It’s years out anyway. What a joke.