With the prices of flash storage coming down fast to meet a kind of parity with disk storage, and solid state memory having obvious throughput and energy savings benefits compared to spinning rust, you might think that disk drives would be pretty much dead out there on the public clouds. But that is not the case, as the new disk-heavy D2 instances from AWS demonstrate.

The two other big public clouds – Google Cloud Platform and Microsoft Azure – have slightly different approaches to disk and flash storage, but they absolutely have mountains of disks in their datacenters and this does not look like it will change any time soon.

The day when one of the big clouds or hyperscalers goes all non-volatile for storage will be a big day – if it ever comes to pass. That disks are still a key component of storage for the hyperscalers and cloud builders is, as much as any other factor, probably a good indication that enterprise datacenters and supercomputer centers will also be dependent on disk storage for the foreseeable future. Disk drives still have their place.

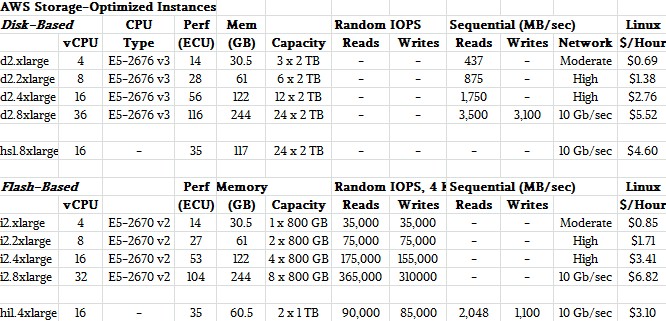

At Amazon Web Services, the company has a dizzying array of different instance types with varying levels of compute, memory, and storage to choose from. There are three levels of storage-optimized instances now that the new D2 types have launched this week.

Back in December 2012, AWS rolled out the High Storage 1 (HS1) instance, its first EC2 instance type that had scads of local storage in the node for the instance to control. Importantly, so long as the storage-optimized instance was up and running, the storage associated with the instance stayed dedicated to it. The nodes in the HS1 instances could also be clustered together on local, high-speed networks inside of the AWS datacenters to create persistent clustered file systems such as HDFS, Gluster, and maybe even Lustre, or to house data warehouses or seismic datasets for other kinds of big jobs with a heavy storage component. The HS1 instances came in one flavor, the hs1.8xlarge, with 16 virtual CPUs and 117 GB of virtual memory backed by two dozen 2 TB disk drives. This hs1.8xlarge instance had 35 ECUs of relative compute power on the Amazon scale, which means those 16 threads in the instance provided roughly 35 times the CPU oomph of the 1.7 GHz Xeon chip in the original EC2 instances that came out in 2006.

The D2 instances are a follow-on to this one disk-heavy instance from more than two years ago, and they are more expensive than the HS1 instance, too. Apparently it has taken companies some time to warm up to creating their own clustered file systems on the public cloud, but if customers were not asking for this, Amazon would not build it and it certainly could not raise prices. (To be fair, the HS1 instance, at $69 per TB per month, has a lot less compute and a little less memory than the most similar D2 instance, which costs $83 per TB per month by our math.) The thing is, nodes very much like these D2 instances are very likely underpinning the data warehousing and Hadoop services at AWS, and are probably to be found in parent company Amazon’s operations, too. So it is not a stretch to expose such storage-heavy instances to AWS customers.
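As a rough illustration of what the per-terabyte figure implies, the sketch below works backward from the $69 per TB per month quoted for the HS1 instance and its two dozen 2 TB drives to a monthly and hourly cost for the whole instance. The 720-hour month is an assumption of this sketch, not a figure from AWS, and the function name is purely illustrative.

```python
# Figures quoted in the article for the hs1.8xlarge instance.
HS1_DRIVES = 24                 # "two dozen" disk drives
HS1_TB_PER_DRIVE = 2            # 2 TB each
HS1_COST_PER_TB_MONTH = 69.0    # dollars per TB per month

# Assumption of this sketch: a 30-day month of continuous use.
HOURS_PER_MONTH = 720


def implied_monthly_cost(cost_per_tb_month, drives, tb_per_drive):
    """Monthly instance cost implied by a per-TB price and raw capacity."""
    return cost_per_tb_month * drives * tb_per_drive


hs1_capacity_tb = HS1_DRIVES * HS1_TB_PER_DRIVE
hs1_monthly = implied_monthly_cost(
    HS1_COST_PER_TB_MONTH, HS1_DRIVES, HS1_TB_PER_DRIVE
)
hs1_hourly = hs1_monthly / HOURS_PER_MONTH

print(f"Raw capacity: {hs1_capacity_tb} TB")
print(f"Implied cost: ${hs1_monthly:,.0f} per month, ${hs1_hourly:.2f} per hour")
```

The same function can be pointed at any other instance once its drive count, drive size, and per-TB price are known.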

Here is how the various storage-optimized instances stack up at AWS:

We have pored through the manuals and spec sheets for the instances and, as you can see, there are bits of data missing that would allow more accurate comparisons between the all-disk D2 and all-flash I2 instances. But we can certainly compare them all on the cost per unit of capacity and, if we assume a rate of around 250 IOPS for a 2 TB disk drive, on the cost of random read IOPS. If you do that math, then the HI1 all-flash instance from a few years back cost $1,116 per TB per month, and the I2 all-flash instances cost $767 per TB per month. So dedicated flash capacity on the I2 instances costs 9.2X as much as dedicated disk capacity on the D2 instances. But if you turn it around and look at IOPS, the flash is a lot less costly. Looking at random read IOPS, the two smaller I2 instances cost around 2 cents per IOPS per month and the two fatter configurations cost about 1 cent per IOPS per month. (The HI1 instance was around 2 cents per IOPS per month.) But the D2 instances cost 66 cents per IOPS per month, a factor of roughly 30X to 60X more expensive, depending on which ones you want to compare.
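For readers who want to check the arithmetic, here is a minimal sketch that reproduces the capacity and IOPS comparisons from the figures quoted above. The per-TB prices and the 250 IOPS per 2 TB drive assumption come from the text; the variable and function names are illustrative only.

```python
# Dollars per TB per month, as quoted in the article.
I2_COST_PER_TB = 767.0   # all-flash I2
D2_COST_PER_TB = 83.0    # all-disk D2

# Article's assumption: about 250 random read IOPS per 2 TB disk drive.
DISK_IOPS_PER_DRIVE = 250
DISK_TB_PER_DRIVE = 2

# Article's rounded flash figures, in dollars per IOPS per month.
I2_SMALL_COST_PER_IOPS = 0.02   # two smaller I2 instances
I2_LARGE_COST_PER_IOPS = 0.01   # two fatter I2 instances


def disk_cost_per_iops(cost_per_tb, iops_per_drive, tb_per_drive):
    """Convert a per-TB price into a per-IOPS price for disk storage."""
    iops_per_tb = iops_per_drive / tb_per_drive
    return cost_per_tb / iops_per_tb


capacity_ratio = I2_COST_PER_TB / D2_COST_PER_TB
d2_cost_per_iops = disk_cost_per_iops(
    D2_COST_PER_TB, DISK_IOPS_PER_DRIVE, DISK_TB_PER_DRIVE
)
ratio_vs_small_i2 = d2_cost_per_iops / I2_SMALL_COST_PER_IOPS
ratio_vs_large_i2 = d2_cost_per_iops / I2_LARGE_COST_PER_IOPS

print(f"Flash costs {capacity_ratio:.1f}X disk per TB")
print(f"D2 disk costs ${d2_cost_per_iops:.2f} per IOPS per month")
print(f"Disk is {ratio_vs_small_i2:.0f}X to {ratio_vs_large_i2:.0f}X flash per IOPS")
```

Because the flash figures are rounded to whole cents, the resulting multiples are only approximate, which is why the text quotes them as a range.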

The high IOPS of the I2 instances make them ideal for MongoDB and Cassandra data stores and some online transaction processing jobs where low latency is important, says AWS.

The new D2 instances work best with a Linux kernel that has been tuned up to take advantage of a feature called persistent grants in the Xen hypervisor. (AWS uses a custom variant of Xen to carve up its physical servers into virtual machines.) AWS has its own Linux AMI 2015.03 supporting persistent grants, and Amazon machine images have been made for Canonical’s Ubuntu Server 14.04, Red Hat’s Enterprise Linux 7.1, and SUSE’s Linux Enterprise Server 12 to support this feature as well. The Haswell Xeon E5 processors in the D2 instances run at 2.4 GHz and can Turbo Boost up to 3 GHz if there is enough cooling capacity in the system. Amazon has activated the NUMA-aware memory placement features on the D2 instances, which increases the compute efficiency of the 18-core parts used in the largest D2 instance. The C states and P states power management features of the Haswell chips are also exposed, so developers can have direct control of these functions as they load code onto the D2 instances.

Incidentally, the D2 instances are tuned to talk to the Elastic Block Store (EBS) service on AWS, and have dedicated throughput to EBS built into the prices above, ranging from a low of 500 Mb/sec for the smallest D2 instance to 4,000 Mb/sec for the largest D2 instance.

Many Ways To Mix Flash And Disk

Trying to juxtapose the capacity and performance of flash and disk is perhaps why Google Cloud Platform does not offer separate flash and disk storage. As Tom Kershaw, director of product management for Google Cloud Platform, told us when explaining how its new Nearline Storage operates, the search engine giant has created a single storage fabric that mixes flash and disk and then has a software abstraction layer to provide different levels of performance, durability, and availability for that storage. Google does it implicitly with software; AWS does it explicitly with different instance types.

Microsoft’s Azure public cloud is somewhere in the middle, offering different data management services – SQL Server and Redis databases – as well as raw storage on either disk or flash that is then associated with Azure compute instances. The Azure disk storage comes in locally redundant, zone redundant, geographically redundant, and read-access geographically redundant protection levels, and is available in four different formats, which Microsoft calls block blobs, page blobs and disks, tables and queues, and files. The files service is in technical preview, and so is a variant of the page blobs and disk service that runs atop flash-only arrays. This latter bit is called Premium Storage and is available in Microsoft’s US West, East US 2, and West Europe regions, and Microsoft says it can deliver more than 50,000 IOPS per VM at under 1 millisecond of latency on its flash storage.

It will be interesting to see if AWS, Google, or Microsoft eventually offer flash capacity with inline compression, deduplication, snapshotting, and other features common in all-flash arrays as part of the services. With such capabilities, the cost of flash storage can be brought way down and make it more competitive with raw disk capacity. This is precisely what a slew of all-flash upstarts have done to carve out their place in the datacenter.

Today yes. The question is tomorrow. We have reason to believe that the 3D versions of NAND flash from Intel/Micron and maybe also Samsung are about to offer 3X better price/performance. Will that be enough to stop all the spinning? Maybe. There must be some number that would be good enough. My estimates are that somewhere between 5X and 10X, the spinning stops for good.

Do disks have better price/performance coming so they can stay ahead of flash? Well, they say so, but don’t look back fellas, something may be gaining on you.